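《鄉民大學問 EP.36》 Subtitled | Speaker Han's surprise attack! Blue, green, and white legislators gasp at the sight of "Han Kuo-fish" (韓國魚)! Hsieh Lung-chieh doesn't dare sleep and says the later it gets, the more hyped he is, but what is keeping him busy? Yeh Yuan-chih names the Legislative Yuan's No. 1 female warrior: it's "her"! Wang Shih-chien boasts of one thing even Han Kuo-yu can't match! | NOWnews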

NOW Video

More NOW Video Highlights


Expert Forum》Chiu Shih-yi / House passes $95 billion in aid for Ukraine as Republican infighting flares up again

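Anyone who follows U.S. politics even casually should know that Republicans currently hold the majority in the House, and the Speaker, Mike Johnson, is also a Republican. The Senate majority, however, belongs to the Democrats, and the Majority Leader (the de facto leader of the Senate) is Democrat Chuck Schum…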

2024-04-20 21:29

-

West Africa's Niger turns pro-Russia! US agrees to withdraw troops; drone base built for $100 million to close

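The United States announced on Friday that it has agreed to withdraw its troops from the West African nation of Niger. All of the more than 1,000 US troops stationed there will leave, and a drone base built at a cost of over $100 million will also close. Since last year's coup, Niger's new military junta has turned toward Russia, and the US decision to withdraw amounts to an admission of a setback there. According to 《B…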

2024-04-20 21:20

-

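Chen Ya-lan plays a huadan (young female lead) for the first time in years and asks for a kiss! Chuang Kai-hsun dons full opera makeup: "Worried the fans will come after me"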

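Chen Ya-lan, a tireless promoter of Taiwanese opera (gezaixi), has teamed up with Chuang Kai-hsun, both Golden Bell Best Actor winners, to co-star in the gezaixi workplace drama 《勇氣家族》. At the premiere press conference today (19th), Chen and Chuang, who play a married couple in the series, made a grand entrance in full opera costume. Chen, who in the past mostly played male roles, for the first time in a long…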

2024-04-19 18:18

-

Fire scare on Taipei Metro's Zhonghe-Xinlu Line! A power bank smokes and catches fire, affecting 750 people

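At around 5 p.m. today (20th), a fire was reported on Taipei Metro's Zhonghe-Xinlu Line. Frightened passengers fled through the cars, and the train eventually made an emergency stop at Songjiang Nanjing Station. Taipei Metro said that at the Huilong-bound platform of Songjiang Nanjing Station, a power bank carried by a passenger on a train began emitting smoke, and passengers used the train's…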

2024-04-20 20:37


Top News


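Denying she pulled strings on her mother-in-law's loan! Hsu Chiao-hsin shows screenshots of chats with the loan officer; DPP councilor suspects a staged exchange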

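KMT legislator Hsu Chiao-hsin was drawn into controversy after her husband's elder sister and that sister's husband were suspected of fraud, and a DPP Taipei city councilor then alleged that Hsu had "negotiated the interest rate" on her mother-in-law's loan. This afternoon (20th), Hsu released her conversation records with a loan officer at Taipei Fubon Bank's Pingtung branch, stressing that when her mother-in-law applied for the loan…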

2024-04-20 20:38

-

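Frequent power outages across Taiwan! Taipower's president resigns to take responsibility; Chiang Wan-an: "Replacing a few people won't solve it"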

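After recent warnings of a power-rationing crisis, outages have occurred on consecutive days. Taipower President Wang Yao-ting apologized to the public today (20th) and tendered his resignation, while Minister of Economic Affairs Wang Mei-hua hopes to persuade him to stay. Taipei Mayor Chiang Wan-an said the problem cannot be solved right away by replacing a few people or appointing a new minister. Chiang attended the 2024…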

2024-04-20 18:20

-

Wang Yao-ting resigns; Chang San-cheng: "It won't help! Taipower alone shouldn't pay for a misguided energy policy"

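Power outages have been frequent in recent days, affecting more than 10,000 households in Taoyuan. Taipower has become the target of widespread criticism, and its president, Wang Yao-ting, resigned today (20th) to take responsibility. Taoyuan Mayor Chang San-cheng said this is not a problem one Taipower president can solve, nor should Taipower bear it alone. Chang said he does not think…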

2024-04-20 18:05

-

Young Ko voter slams DPP as only able to "resist China and protect Taiwan"; Cheng Li-chiun responds head-on

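Vice Premier-designate Cheng Li-chiun attended the DPP's "Investing in Future Generations" youth forum today (20th) and held a dialogue with young people, experts, and scholars. A young voter who cast a presidential vote for TPP Chairman Ko Wen-je and a party-list vote for the DPP asked what the DPP has left besides "resisting China and protecting Taiwan." Cheng responded that it was not because…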

2024-04-20 17:11

Offbeat


It's practically all oil! "Baiye tofu" isn't really tofu, and its ingredient ratio shocks foodies: "I don't dare eat it anymore"

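"Baiye tofu" is a familiar staple for people who often eat out, but did you know it isn't actually "real tofu"? One person said many people assume baiye tofu is a soy product, and on learning its calorie count and ingredients, felt cheated. Nutritionists have also warned that hidden…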

2024-04-20 20:19

-

South Korean visitors pay NT$400 for two fruits at Raohe Night Market! Netizens slam it as a rip-off; the stall owner responds

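Taiwanese night market prices are sparking debate again! Well-known South Korean YouTuber 「韓勾ㄟ金針菇」 recently took NARA, a young North Korean defector, to Taipei's Raohe Night Market to sample a variety of foods. Along the way the pair stopped at a fruit stall, where one serving each of sugar apple and wax apple unexpectedly cost NT$400, leaving many…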

2024-04-20 19:12

-

The "ultimate" way to boil eggs! Endorsed by the Japanese government: saves lots of water and gas, and works in Taiwan too

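The "ultimate" method for boiling eggs has been found! Japan's Ministry of Agriculture, Forestry and Fisheries recently shared on social media a way to cook delicious boiled eggs with as little water and gas as possible. The simple steps also work in Taiwan, and tens of thousands of Japanese users praised how practical it is, regretting not learning it sooner: "I've wasted so much water and gas boiling eggs…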

2024-04-20 14:10

-

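All-English kindergarten takes a field trip to a recycling center; furious parent: "My child will never be exposed to this kind of work"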

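Children are the nation's future, and many kindergartens (preschools) arrange hands-on activities such as visits to police stations and museums to deepen children's understanding of society. However, one parent recently complained that after paying a great deal to send their child to an all-English kindergarten, the field trip turned out to be a visit to a recycling center, questioning…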

2024-04-19 11:32

Entertainment


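Rakuten Girls' Chang Ya-han "still hasn't danced a month into the season"! The reason leaves her exasperated: "Cut off from the world"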

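The CPBL's 35th season has been under way for nearly a month, but renovations at Taoyuan Baseball Stadium, the Rakuten Monkeys' home ground, are still unfinished, so the players and the Rakuten Girls cheerleading squad have yet to play a home game, sparking discussion among fans. After 琳妲 said it "doesn't feel like game season at all," vice-captain Chang Ya-han (Kimi) also posted a lament: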

2024-04-20 20:19

-

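Sandy Wu suddenly announces her second pregnancy! She and her husband joyfully welcome a baby girl: "Our dear yet unfamiliar new baby"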

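Sandy Wu (吳姍儒), daughter of Jacky Wu (吳宗憲), gave birth to her son "小初一" in March 2023, and today (20th) announced she is pregnant again, this time with a girl. She happily shared the news, exclaiming: "Welcome, our dear yet unfamiliar new baby." ▲Sandy Wu recently held a low-key baby gender…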

2024-04-20 19:27

-

Energy's Taipei Arena concert sells out! Extra show added on July 28; event guide and highlights revealed

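Energy, the pioneers of hard-hitting singing-and-dancing groups, will hold their comeback concert 《一觸即發》 at Taipei Arena on July 27. Tickets sold out as soon as they went on sale at noon today (20th), so the organizer announced an additional show on July 28, with tickets going on sale at 11 a.m. on April 27 through the tixCraft (拓元) ticketing system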

2024-04-20 18:59

-

Lee Chih-kai misses Olympic qualification by 0.1 points; 《翻滾吧!男孩》 director: "Twenty years of struggle for 45 seconds"

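Taiwan's "Pommel Horse Prince" Lee Chih-kai, one of the stars of the documentary 《翻滾吧!男孩》 (Jump! Boys), finished second by a margin of 0.1 at the Gymnastics World Cup in Doha last night (19th), missing out on an early ticket to the Paris Olympics. Lin Yu-hsien, director of 《翻滾吧!男孩》, also posted in support of Lee: "For 4…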

2024-04-20 18:14

Sports


Taipei Taishin Mars reach the playoffs in their first season; Hsu Hao-cheng laughs: "Time for a good shower and some sleep"

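Taipei Taishin Mars faced the Taiwan Beer Leopards today (20th). Poor team shooting left them trailing 53:60 at halftime. The Mars only found their offensive and defensive rhythm in the final quarter, and although they held their opponents to just 10 points in that period, they still lost 96:101 and could not clinch a playoff berth on their own. In the end, thanks to today's T…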

2024-04-20 21:24

-

CPBL Quick Take / A masterful assist for Pan Wei-lun's 149th win! Manager Lin Yueh-ping ("餅總") pulls off a clever plan

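Uni-Lions' 42-year-old legendary pitcher Pan Wei-lun ("Dudu") threw 2.2 flawless scoreless innings in relief today (20th) to earn his 149th career win, just one short of the 150-win milestone, and the unsung hero behind it was undoubtedly manager Lin Yueh-ping ("餅總"). Lin's "fake starter" script worked perfectly, helping Pan secure the win. After the…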

2024-04-20 20:49

-

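Chen Po-ching's no-hit bid ends after 7.2 innings! He throws 122 pitches for his first win as TSG beat the Brothers 3:0 to snap a seven-game losing streak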

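The TSG Hawks have finally snapped their recent seven-game losing streak! Hawks left-hander Chen Po-ching started against the CTBC Brothers today (20th) and held them hitless through 7.2 innings until Chan Tzu-hsien broke up the no-hitter with a hit in the top of the 8th. Chen allowed only one hit and no runs all game, and the TSG Hawks shut out the CTBC Brothers 3:0. Manager "洪…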

2024-04-20 20:44

-

Pan Wei-lun earns career win No. 149! 2.2 scoreless innings in his season debut as the Uni-Lions beat the Fubon Guardians 7:1

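Uni-Lions' 42-year-old pitcher Pan Wei-lun ("Dudu") made his season debut against the Fubon Guardians today (20th). Relieving starter Lin Chao-en, he pitched 2.2 scoreless innings, and the Uni-Lions went on to beat the Fubon Guardians 7:1. Pan earned his first win of the season and the 149th of his career, leaving him short of the 150-win mark by…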

2024-04-20 20:01

Finance & Lifestyle


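More important than your AC temperature! Stop using your electric fan in these "3 situations"; Taipower says they are extremely dangerous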

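As summer approaches, many people have probably noticed the weather getting hotter. Many households have already switched on their electric fans, and some couldn't resist turning on the air conditioning early! But do you know whether your fan is still "healthy"? Taipower's fan page shared that household electric fans…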

2024-04-20 18:34

-

Stray cat wanders around "loaded with sandbags"! A vet's exam reveals a shock: it was carrying another version of itself

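Some stray cats are looked after and fed canned food by kind-hearted cat lovers, but others aren't so lucky! Recently in mainland China, a stray cat was spotted on the street looking different from other strays: it appeared to have several sandbags hanging from its body and walked very slowly. After being rescued by a kind volunteer from a foster shelter…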

2024-04-20 18:30

-

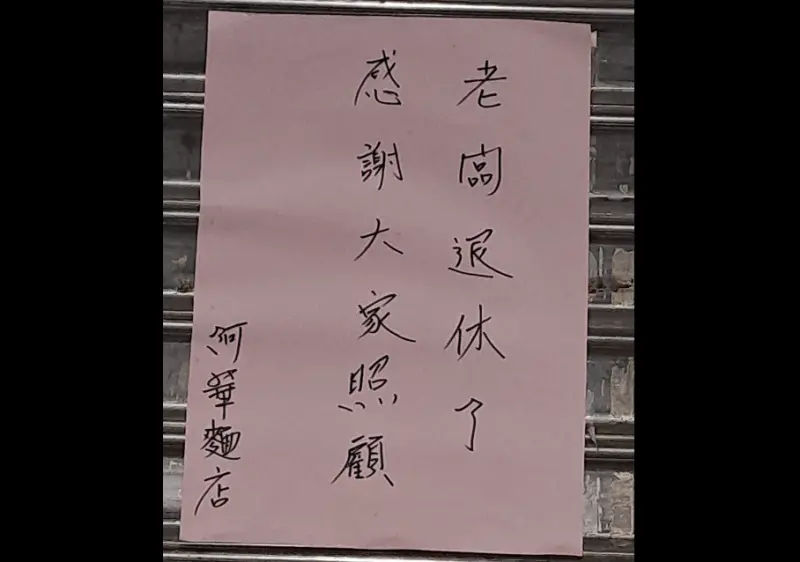

Keelung's 60-year-old "A-Hua Noodle Shop" closes! The 65-year-old second-generation owner retires, and locals are heartbroken

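「阿華麵店」 (A-Hua Noodle Shop), an alley eatery in Keelung for 60 years, has announced its closure. The 65-year-old second-generation owner plans to retire, and the owner's children work elsewhere with no plans to come home and take over, leaving many Keelung locals sad to see it go. At the same time, many people were confused, thinking the famous 「阿華炒麵」 (A-Hua Fried Noodles) was closing, which…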

2024-04-20 16:27

-

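Taiwanese instant noodle makers' "little trick" exposed! After 20 years of eating them, people finally notice: almost no brand is an exception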

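Instant noodle flavors on the market are increasingly diverse, with manufacturers constantly adding new features, and foreign visitors to Taiwan always make a point of trying a bowl of Taiwanese instant noodles. But if you've loved instant noodles since childhood, have you noticed a "little blind spot" in them? Recently, netizens have been discussing "Not…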

2024-04-20 15:04

Global


Two mayoral candidates killed in a single day! Gang and cartel violence looms over Mexico's most dangerous election

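Mexico will hold general elections on June 2 this year, but attacks on politicians have been frequent, and on the 19th two more mayoral candidates were killed, drawing attention to rampant gang violence in the country. According to foreign media reports, Mexican prosecutors confirmed that in the state of Tamaulipas…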

2024-04-20 19:06

-

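Desert city Dubai lacks a drainage system! Floodwater still hasn't receded days after torrential rain, leaving pump trucks as the only option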

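The United Arab Emirates was hit by torrential rain on the 16th, with more than 120 mm falling within 12 hours, exceeding Dubai's average annual rainfall. The storm caused widespread damage; days later the floodwater had still not receded, and Dubai International Airport remained in chaos. Some foreign media noted that this is because the desert city of Dubai…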

2024-04-20 17:46

-

If Japanese prices keep rising, a rate hike is "very likely," says BOJ Governor

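Bank of Japan Governor Kazuo Ueda, speaking in Washington, D.C. on the 19th, reiterated that if underlying price increases in Japan persist after short-term factors are excluded, the likelihood of a BOJ rate hike would be "very high." According to Kyodo News (《共同社》), Ueda did not mention a specific timeline, but on the yen, driven by the Japan-US interest rate gap…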

2024-04-20 17:00